AI visibility has become a real priority in B2B, and it’s not hard to see why. Per Forrester, 89% of B2B buyers use Generative AI during the buying process, making AI-driven touchpoints a core part of how purchasing decisions are now shaped.

Yet despite this urgency, the category remains messy, and most tools miss critical nuances that matter in B2B. Pure visibility tracking, which indicates whether you’re mentioned across 100 prompts, doesn’t tell you how AI understands your brand, which strengths it highlights for different stakeholders, or whether that visibility translates into pipeline.

For B2B teams navigating long, multi-stakeholder buying cycles, these gaps matter.

The majority of AI-driven research happens without generating trackable citations. Buyers ask thousands of variations of the same question across different contexts, and different stakeholders evaluate based on different priorities such as cost, risk, usability, or integration fit.

This article evaluates platforms based on what they actually help you do: tracking perception, executing improvements, or connecting visibility to revenue, with a focus on what works for complex B2B cycles.

Key Takeaways

- AI visibility tools solve different problems across three dimensions: Track (how AI represents you), Execute (acting on insights through content and workflows), and Impact (connecting visibility to business outcomes). Most tools excel at one; few connect all three.

- For B2B brands, the majority of AI-influenced decisions happen before citations appear, when AI is framing problems, defining evaluation criteria, and filtering options based on how it understands your category.

- Per Demand-Genius research, just 16% of AI responses across complex journeys cite a brand directly, making common metrics like citation rate and share of voice poor measures of AI influence for B2B brands.

- Evaluate tools based on what your team actually needs to improve: baseline visibility, execution workflows, or the ability to shape how AI understands your positioning.

- Demand-Genius connects tracking, execution, and impact to help you shape AI perception strategically, rather than just monitoring where you appear.

How Do These Profound Alternatives Compare?

| Tool | Best For | Track | Execute | Impact | Pricing | Trial |
| --- | --- | --- | --- | --- | --- | --- |
| Demand-Genius | B2B brands needing to shape AI perception across tracking, execution, and impact | Very Strong | Moderate | Very Strong | Mid-High (starts at ~$99/mo) | Yes (free audit) |
| Conductor | Enterprise content and SEO teams that need integrated operations | Strong | Strong | Moderate | High (custom enterprise) | Yes (3-week trial) |
| AirOps | Teams scaling content production through automated workflows | Moderate | Strong | Moderate | Mid-High (task-based usage) | Yes |
| Quattr | Enterprises needing unified AI visibility with automated execution workflows | Strong | Strong | Strong | High (enterprise, dedicated support) | Yes (free site analysis) |
| Semrush | Teams wanting comprehensive SEO and AI visibility tracking | Strong | Moderate | Limited | Mid (starts at $99/mo) | Yes (7-day free trial) |
| Ahrefs | Teams wanting backlink analysis and keyword research with AI visibility | Strong | Moderate | Limited | Mid-High (starts at $129/mo, $29 Brand Radar only) | Yes (trial available) |
| LLMPulse | Teams needing comprehensive AI visibility tracking to establish baselines | Strong | Limited | Limited | Low (starts at $53/mo) | Yes (14-day free trial) |
| Scrunch | Growth teams managing multi-brand or multi-region portfolios needing compliance-ready tracking | Strong | Moderate | Limited | Mid (starts at $250/mo) | Yes (7-day free trial) |
| Peec | Teams and agencies needing affordable AI visibility monitoring with flexible exports | Moderate | Limited | Limited | Low (starts at $103/mo) | Yes (7-day free trial) |
| Otterly | Teams wanting competitive benchmarking and Share of Voice insights | Moderate | Limited | Limited | Low (starts at $29/mo) | Yes (14-day free trial) |

Notes:

- Track, Execute, and Impact ratings are defined as:

  - Very Strong: Industry-leading capabilities; beyond standard functionality

  - Strong: Comprehensive capabilities

  - Moderate: Partial support, but not a primary focus

  - Limited: Minimal or no meaningful functionality

- Pricing converted to USD at current exchange rates (April 2026) for comparison purposes. Check vendor sites for local pricing.

What Profound alternatives should solve for B2B teams (beyond visibility tracking)

Tracking visibility metrics like Share of Voice or Citations alone doesn’t tell you whether you influenced how the problem was framed, how you were positioned against alternatives, or whether visibility translated into pipeline. What matters is whether you shaped the decision before it was made.

For B2B teams, this gap is particularly acute. Complex buying cycles span months and multiple research phases:

- Early-stage: Problem definition questions

- Mid-stage: Approach evaluation questions

- Late-stage: Vendor comparison questions

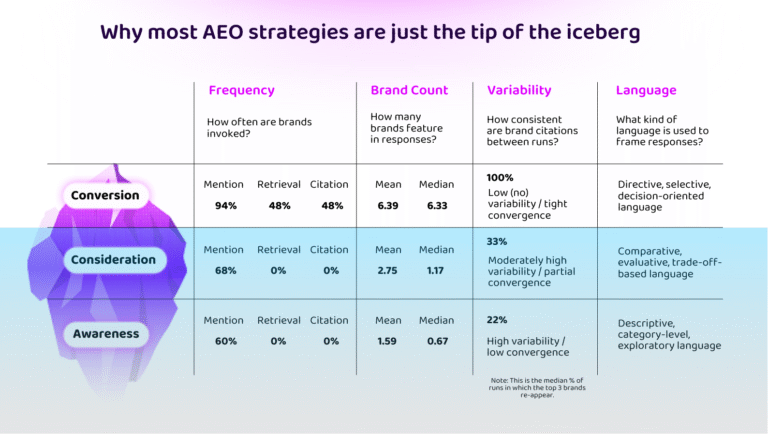

Our research shows that brand mentions and citations only appear at the conversion stage, with awareness and consideration stages (the majority of complex buyer journeys) featuring a 0% citation rate across the board. Put simply, if you track citations, you are tracking visibility in a small subset of interactions only.

You miss the earlier conversations where AI shaped how buyers framed the problem and defined their requirements. By the time you appear, the criteria are already set.
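To make the stage-level gap concrete, here is a minimal Python sketch of how a citation rate per journey stage could be computed. The sample records, the field names (`stage`, `cites_brand`), and the helper `citation_rate_by_stage` are all hypothetical and invented for illustration; they are not the research dataset or any vendor's method.

```python
from collections import defaultdict

# Hypothetical sample: each record is one AI response, labeled with the
# journey stage of the prompt and whether the response cited any brand.
# These values are made up for illustration only.
responses = [
    {"stage": "awareness", "cites_brand": False},
    {"stage": "awareness", "cites_brand": False},
    {"stage": "consideration", "cites_brand": False},
    {"stage": "consideration", "cites_brand": False},
    {"stage": "conversion", "cites_brand": True},
    {"stage": "conversion", "cites_brand": False},
]


def citation_rate_by_stage(records):
    """Return {stage: share of responses in that stage that cite a brand}."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for record in records:
        totals[record["stage"]] += 1
        if record["cites_brand"]:
            cited[record["stage"]] += 1
    return {stage: cited[stage] / totals[stage] for stage in totals}


rates = citation_rate_by_stage(responses)
# Blended rate across all stages, which hides the per-stage gaps.
overall = sum(r["cites_brand"] for r in responses) / len(responses)

for stage, rate in rates.items():
    print(f"{stage}: {rate:.0%}")
print(f"overall: {overall:.0%}")
```

A single blended citation rate can look acceptable while the earlier stages sit at zero, which is why a per-stage breakdown is the more useful view.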

The Bottom Line: For B2B teams navigating 3-12 month multi-stakeholder buying cycles, understanding how AI views your strengths, weaknesses, and trade-offs across every interaction matters more than tracking where you’re mentioned. The goal is influence, not just visibility.

Most platforms address only part of this, leaving teams with visibility data but no deeper understanding of how AI represents them to potential buyers, no clear path to action and influence, and no means of measuring impact against the metrics that matter to them, such as pipeline and revenue.

A more useful way to evaluate alternatives is across three dimensions: perception, execution, and impact. Together, these define whether a tool helps you observe AI behavior or actually influence and measure it.

Why tracking AI mentions alone doesn’t work

By the time a brand appears in a response, AI has already framed the problem, defined evaluation criteria, and narrowed the consideration set. Tracking mentions at that point only captures the outcome, not how the decision was shaped.

Our original research shows that most B2B buyer education happens in what we term “Dark AI”: the conversations beneath the surface where problems are framed and requirements are built, without generating any trackable citations or referrals.

This makes existing metrics useful for visibility, but limited for understanding influence. They tell you where you appeared, not how you were evaluated or why you were included. That’s why evaluation needs to go deeper.

What AI visibility tools should actually measure

Effective tools need to go beyond tracking and cover three connected areas: perception, execution, and impact.

The table below shows what each dimension measures and what separates surface-level tracking from strategic influence.

| Dimension | What it measures | What separates good from basic |
| --- | --- | --- |
| Perception | How AI understands and represents your brand across queries | Does it track what the model thinks about you (perception), or just who it mentions (visibility)? Does it show how you’re positioned against alternatives, and whether AI surfaces your strengths to the right stakeholders? |
| Execution | How teams act on gaps and improve representation | Does it help you maintain quality, currency, and consistency at scale, or just churn out low-quality content? Can you deploy changes without rebuilding workflows? |
| Impact | How visibility connects to pipeline, conversions, and revenue | Does it contextualise “AI metrics” against “business metrics”? Can you trace visibility changes to actual pipeline movement and prove ROI? |

Focusing on just one dimension creates predictable gaps:

- Tracking visibility without understanding perception? You know you’re mentioned, but not whether you’re positioned correctly.

- Creating new content without maintaining existing content? You’re building up content debt across your library.

- Measuring visibility, but not business impact? You’re optimizing vanity metrics instead of revenue.

With that in mind, here’s how the 10 best Profound alternatives in 2026 stack up across these dimensions.

The 10 best Profound alternatives in 2026

The platforms below vary widely in scope and focus. Some are built for agencies managing multiple clients, others are designed for in-house teams running complex buying cycles, and a few prioritize affordability and simplicity.

What matters is matching the tool to how your team actually works, whether you need baseline visibility, execution workflows, or the ability to shape perception. Here’s how the top alternatives compare:

- Demand-Genius: Single solution for B2B brands to monitor and influence how AI presents them and connect it to revenue outcomes.

- Conductor: Enterprise content with integrated AI visibility tracking

- AirOps: Content engineering platform for scaling production with AI visibility

- Quattr: Execution-led SEO/AEO with automated CMS deployment

- Semrush: Comprehensive SEO toolkit with AI visibility layer

- Ahrefs: Backlink and keyword research with Brand Radar for AI tracking

- LLMPulse: Multi-model visibility tracking at accessible pricing

- Scrunch: Visibility tracking + Agent Experience Platform for machine-readable content

- Peec: Lightweight multi-model tracking with flexible exports

- Otterly: Competitive benchmarking via Share of AI Voice metric

Now, here’s what each tool actually does and where it fits.

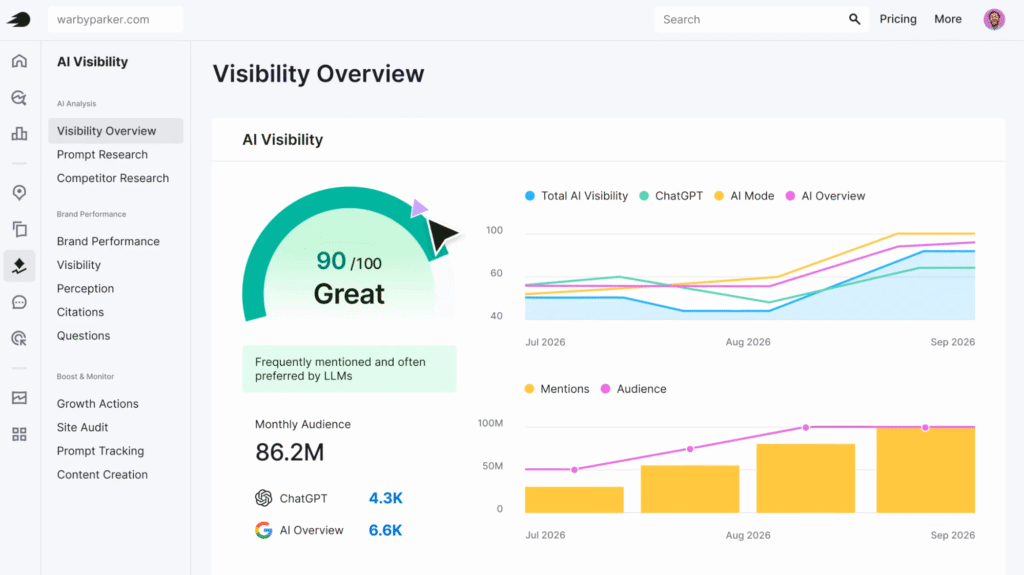

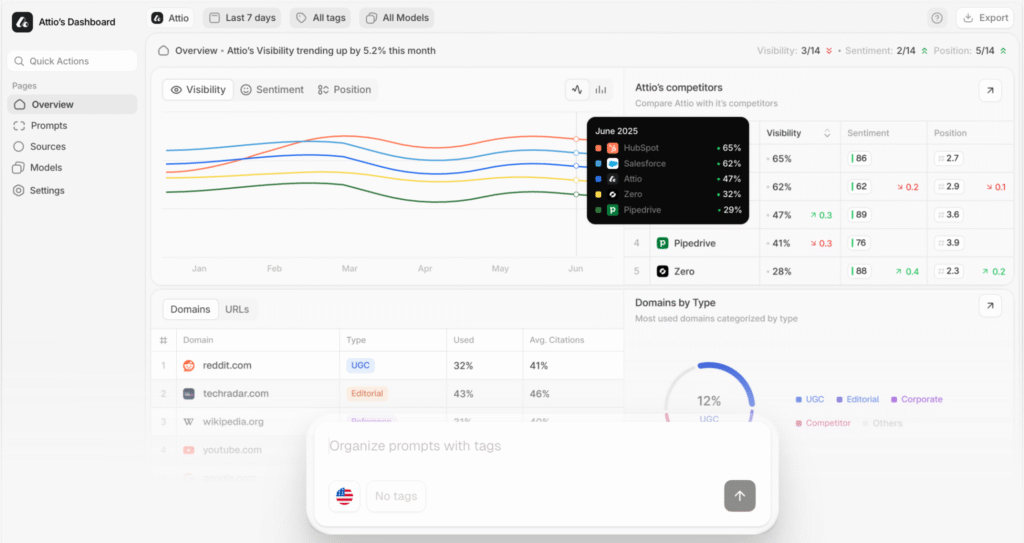

Demand-Genius

Unlike pure visibility tools, Demand-Genius connects tracking, execution, and impact in one platform. It shows how AI perceives your brand, identifies why that perception exists, and ties visibility to pipeline outcomes.

Best for: B2B brands in competitive categories where being correctly understood and positioned by AI throughout long, complex buyer journeys matters more than just being mentioned.

Particularly useful for teams managing large content libraries, dealing with positioning complexity, or needing to prove AI’s impact on revenue.

The platform tracks perception across major LLMs, identifies content debt (gaps between current positioning and published content), and monitors competitive narratives in real-time through AI agents that run continuously across your library.

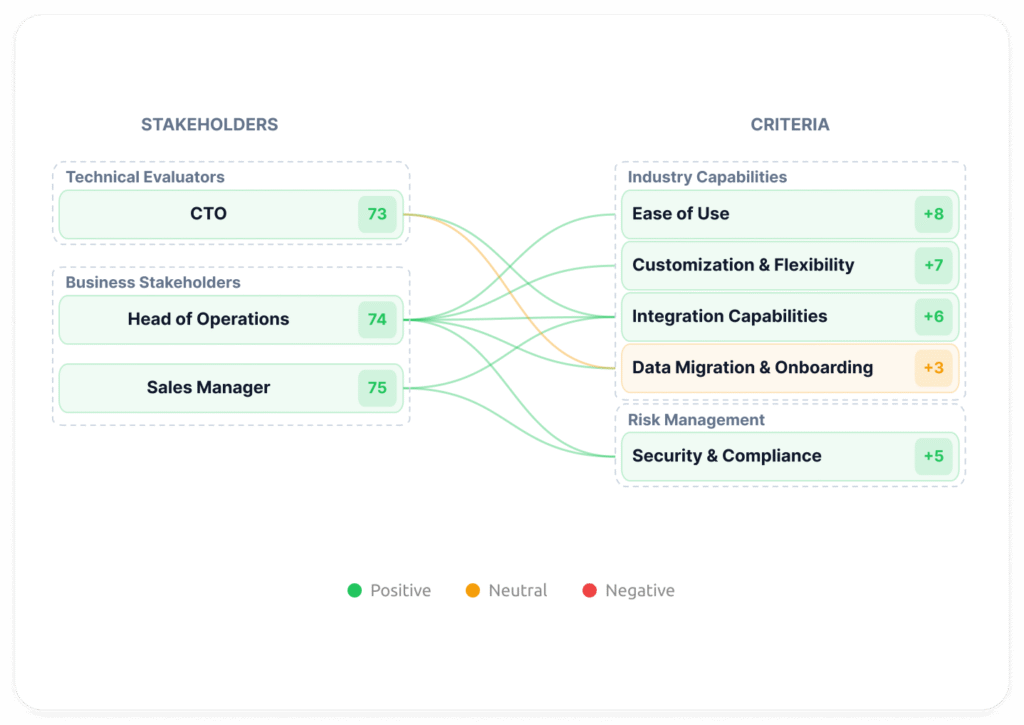

A key differentiator is stakeholder mapping, which tracks how AI positions your brand differently for each buyer persona.

Since B2B buying cycles involve multiple stakeholders with different priorities, understanding whether AI surfaces the right strengths to the right people is critical. As co-founder Tom Rudnai told Martin SFP Bryant of PreSeed Now, most teams produce content “based on hunches” rather than “strategically identifying specific questions or jobs to be done for your buyer.”

The platform maps perceived strengths and weaknesses against what each stakeholder actually cares about, replacing hunches with intelligence.

How it helps:

- Shape AI understanding proactively through continuous content intelligence and positioning alignment

- Identify why AI misrepresents you via content debt analysis: outdated pages, contradictory messaging, narrative gaps flagged at scale

- Map stakeholder fit to ensure AI surfaces your strengths to the buyers who care about them most

- Monitor competitive positioning to see how competitors frame the category and where you can differentiate

- Connect visibility to revenue by tracking which content drives pipeline, not just traffic

- Deploy AI agents for ongoing audits (ICP fit, information gain, brand voice consistency)

Jamaica Lancee at Sensat describes it as “like Clay but for Marketing/Content teams” for its AI agent-driven content intelligence. Pricing is tiered, starting at $99/month for Intelligence (monitoring and content optimization) or $119/month for Performance (revenue attribution and buyer journey analytics).

Trade-offs: No internal content creation workflows, so if you need AI to generate content at scale, this isn’t the platform. Connects all three dimensions rather than focusing on one, which requires strategic alignment across positioning and content teams.

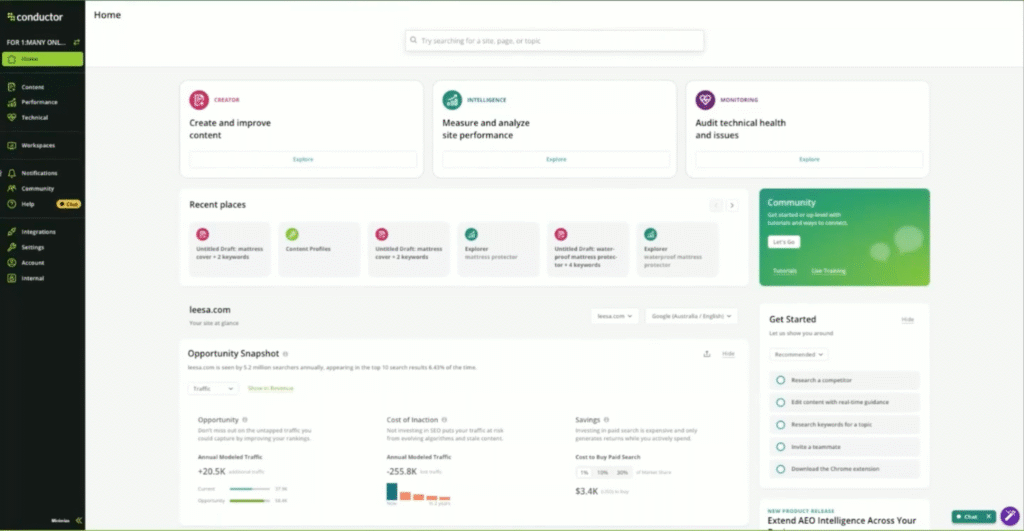

Conductor

Conductor combines AI visibility tracking with content creation workflows and technical monitoring in one enterprise platform, built for large-scale content operations.

Best for: Enterprise content and SEO teams who need AI monitoring integrated with content production and technical oversight.

The platform tracks where your brand appears across ChatGPT, Perplexity, and Google, then helps execute improvements through its AI Writing Assistant (which references competitive analysis and enforces brand guidelines) and continuous site health scanning.

One user notes the “setup was extremely easy, taking less than an hour,” while another highlights how it “reduces time from ideation to creation to publication by more than half.”

How it helps:

- Unify content operations by connecting AI visibility insights with content brief creation, writing workflows, and performance tracking

- Generate on-brand content at scale using AI assistance that references search trends while maintaining brand consistency

- Monitor technical health continuously with 24/7 website scanning and issue detection for enterprise sites

- Consolidate analytics across search insights, technical data, and AI citation performance

- Track which content drives AI citations and how citation context affects positioning

However, users also note friction, like “navigating the tool can be confusing at times,” and “onboarding new hires takes a little longer than it would with a basic, entry-level tool.”

Trade-offs: Learning curve due to feature breadth, custom enterprise pricing, and focus on content optimization after gaps are identified.

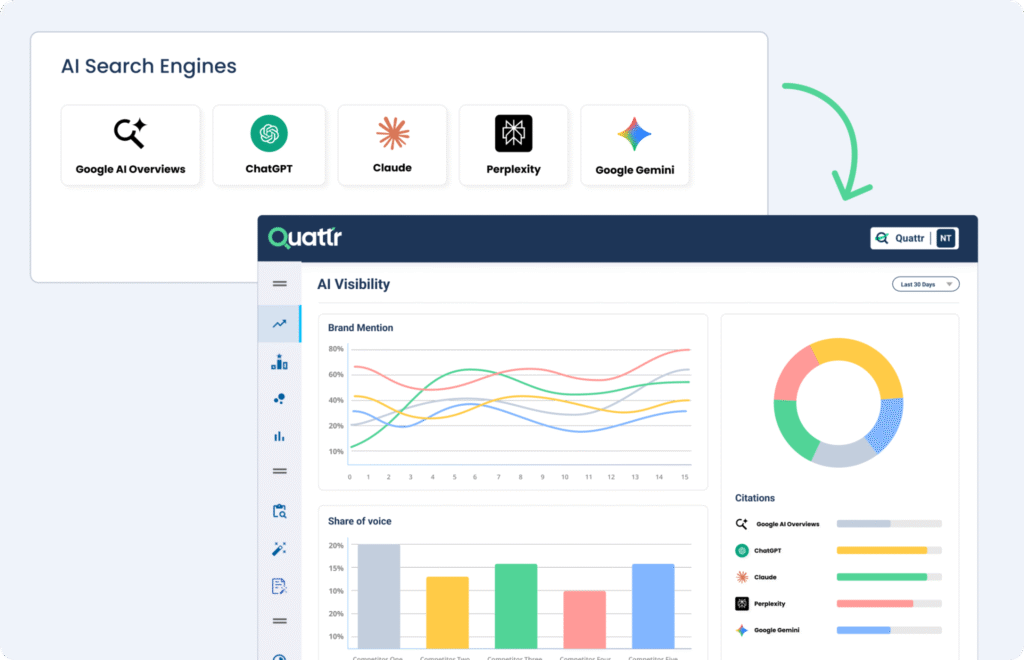

AirOps

AirOps positions itself around “Content Engineering,” building repeatable workflows for content creation and AI visibility rather than one-off generation. The platform combines AI search tracking with content production tools and brand governance systems.

Best for: Teams scaling content production through automated workflows while maintaining brand consistency.

The platform offers two layers: Insights (opportunity surfacing, prompt tracking, AI visibility monitoring) and Action (content workflows, Power Agents, brand governance).

Users report significant time savings: one agency saw “3x efficiency gains.” On the other hand, output accuracy requires review, as one enterprise user noted catching “incorrect stats, wrong product details, and facts that needed a second look before publishing.”

How it helps:

- Build repeatable content workflows using Power Agents and custom automation for scaling production

- Track AI visibility and prompt performance across ChatGPT, Perplexity, and other LLMs

- Govern brand voice and context through Brand Kits that maintain consistency across outputs

- Surface content opportunities through competitive analysis and gap identification

- Integrate with existing tools via API connections to content management systems

The learning curve is acknowledged across reviews: “steep but worth it once you invest the time.”

Trade-offs: Initial onboarding investment required. Task-based pricing can burn through credits faster than expected with heavy use. Requires human review for accuracy.

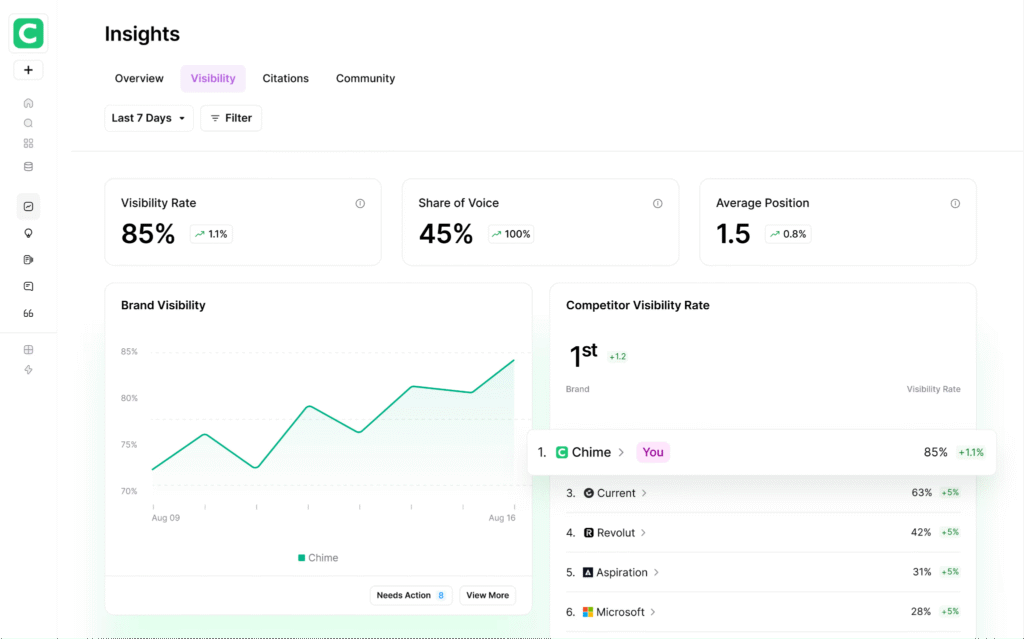

Quattr

Quattr connects AI visibility tracking with automated content execution and CMS deployment. The platform monitors where your brand appears across ChatGPT, Perplexity, and Google AI Overviews, then uses its GIGA AI agent to optimize content and push changes directly to WordPress.

Best for: Enterprises needing unified AI visibility tracking with automated SEO and AEO execution workflows at scale.

The platform integrates first-party data from GSC, GA4, and Adobe Analytics to show which content drives rankings and citations, then automates fixes through autonomous internal linking, content enhancement, and direct CMS deployment.

One enterprise user notes that “pages optimized with GIGA started ranking higher within weeks” and began appearing in ChatGPT and Perplexity citations.

How it helps:

- Deploy optimizations automatically through WordPress CMS integration without development bottlenecks

- Scale internal linking using GIGA’s semantic understanding to build topical authority

- Generate optimized content faster by analyzing top-ranking competitors and drafting intent-aligned content

- Connect visibility to revenue by tying AI citations to GA4 conversions and business outcomes

- Validate changes with predictive scoring before publishing

Setup complexity also surfaces consistently in reviews. The platform is extensive, and one user notes, “integrating GSC, Adobe Analytics, BigQuery, and WordPress took coordination.” Concierge support is provided to help with onboarding.

Trade-offs: Setup complexity requiring integration coordination. Non-technical marketers need support during onboarding. Execution-first approach (answers “how to optimize” not “why AI positioned us this way”).

Semrush

Semrush layers AI visibility tracking onto its established SEO toolkit, monitoring brand mentions across ChatGPT, Perplexity, Gemini, and Google AI Overviews. The platform tracks 213 million LLM prompts and combines traditional SEO tools with emerging AI search metrics.

Best for: Teams wanting comprehensive SEO and AI visibility tracking in one platform.

One user notes the platform “moves from thinking ‘I think this is the problem’ to knowing exactly what to do next.” It combines keyword research, competitor analysis, site audits, and backlink tracking with its AI Visibility toolkit, showing where brands appear in AI responses.

Pricing dominates user complaints, though. The term “expensive” appears in 540 G2 reviews, more than any other critique.

Data reliability also surfaces frequently: “numbers can be unreliable,” one reviewer notes, recommending teams “use estimates directionally rather than as exact figures.”

How it helps:

- Track AI visibility across major LLMs with sentiment and share of voice analysis

- Research keywords and competitors using large databases (27B keywords, 43T backlinks)

- Audit technical SEO and monitor rankings across traditional and AI search

- Analyze competitor strategies, showing top-performing pages and content gaps

- Manage local listings, paid advertising, and social media from one dashboard

On the learning curve, users acknowledge it feels “overwhelming at first,” though the depth pays off with use. Pricing is tiered, from $99/month (Base plan) to custom enterprise plans.

Trade-offs: High cost across all tiers. Learning curve due to feature breadth. Built for comprehensive tracking, not strategic influence or automated deployment.

Ahrefs

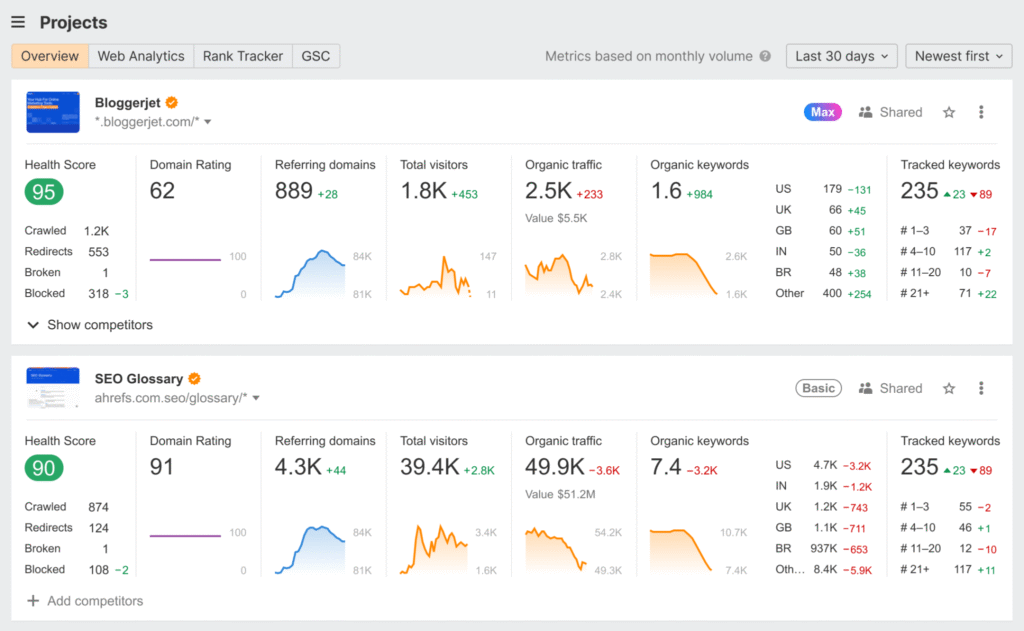

Ahrefs extends its SEO foundation (built on the industry’s largest backlink index) into AI visibility tracking through Brand Radar, which monitors brand mentions and citations across AI chatbots.

Best for: Teams wanting robust backlink analysis and keyword research with AI visibility tracking added.

The platform combines its crawler with 35 trillion backlinks and 110 billion keywords alongside Brand Radar for tracking AI citations. One user who’s been using it for years notes it “hasn’t disappointed me even once,” praising data consistency.

How it helps:

- Track AI visibility across chatbots with Brand Radar, monitoring brand mentions and sentiment

- Analyze backlinks with the industry’s freshest index and most active crawler

- Research keywords using extensive databases (110B keywords across 217 countries)

- Audit technical SEO and monitor site health with automated crawling

- Forecast traffic and connect GSC data for performance insights

Tim Soulo, Ahrefs CMO, positions Brand Radar as competitive with Profound. Pricing starts at $129/month for the Lite plan (full toolkit) or $29/month for Brand Radar standalone tracking.

Pricing surfaces repeatedly as a friction point, with one user noting that “pricing can feel high for solo founders or small teams.” Credit-based limits also constrain heavy users.

Trade-offs: High cost, especially at scale. Credit-based usage limits exploration. Learning curve for new users. Data updates can lag.

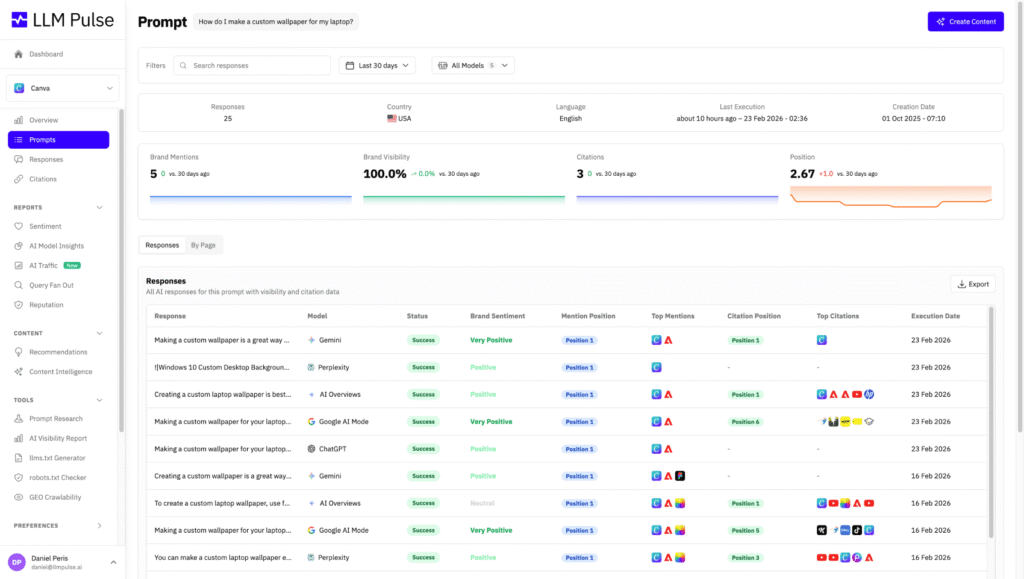

LLMPulse

LLMPulse focuses exclusively on AI visibility tracking across 10+ models, including ChatGPT, Perplexity, Gemini, and Google AI Mode, providing multi-model monitoring without content creation or strategic positioning features.

Best for: Teams needing comprehensive AI visibility tracking to establish baselines and monitor trends before investing in execution or influence systems.

The platform monitors brand mentions, sentiment, and competitive share of voice across major AI models weekly.

According to testimonials on their website, one user notes it filled a “huge blind spot given how many people are now using ChatGPT and Google AI Mode,” while another highlights how “the team moves fast, they’ve implemented improvements almost immediately.”

How it helps:

- Track AI visibility across 10+ models with weekly monitoring frequency

- Monitor competitive positioning through share of voice metrics, showing mention percentages

- Analyze sentiment trends to identify how AI characterizes your brand over time

- Integrate visibility data via Looker Studio connector, CSV exports, and API access

- Access affordable multi-model tracking starting at $53/month (Starter plan)

Used by 500 brands worldwide, including Swiss Marketplace Group, the platform also offers transparent pricing with a 14-day free trial and no sales calls required.

Trade-offs: Shows what’s happening, not why or how to influence it. No content workflows or strategic positioning intelligence. Smaller platform with less enterprise presence than established competitors.

Scrunch

Scrunch combines AI visibility tracking with its Agent Experience Platform (AXP), which creates machine-readable content versions to improve how AI systems parse and understand your site without disrupting human UX.

Best for: Growth teams managing multi-brand or multi-region portfolios who need compliance-ready tracking plus technical content optimization for AI readability.

The platform monitors brand visibility across ChatGPT, Perplexity, and Claude while AXP restructures content for optimal AI agent comprehension.

One G2 reviewer who tested multiple AI visibility tools calls Scrunch “by far the best group” in terms of ease of use. However, users consistently request better data synthesis.

One reviewer notes, “too much information about every prompt, would be great to just ask an AI tool” for analysis. Others mention limited historical data compared to established SEO platforms and difficulties using the API for custom reporting.

How it helps:

- Improve AI content comprehension through AXP that extracts and structures meta, titles, descriptions, and keywords for machine parsing

- Track visibility with enterprise security via SOC 2 Type II compliance, role-based access, and data APIs

- Scale across brands and regions with multi-brand, multi-website, multi-language support in a single dashboard

- Identify optimization opportunities through actionable tips, citation source analysis, and error detection for crawl blocks

- Monitor granular AI behavior showing how AI interprets your site and where citation opportunities exist

Scrunch is used by 500+ companies, including Lenovo, Clerk, and Skims. It is SOC 2 Type II compliant and offers a 7-day free trial. Pricing starts at $250/month for the Core tier.

Trade-offs: Data limitations for comprehensive analysis. Limited historical data compared to established platforms. Reporting requires API expertise, and AXP focuses on technical content optimization.

Peec

Peec offers lightweight AI visibility tracking with straightforward monitoring across ChatGPT, Perplexity, Gemini, AI Overviews, and Claude, focusing on affordable baseline tracking with flexible exports and unlimited seats.

Best for: Teams and agencies needing fast, affordable AI visibility monitoring with strong sentiment tracking and flexible data export options.

The platform tracks brand visibility, position rankings, and sentiment across major models with citation-level analysis.

Reviewers often note that the platform provides “quick, actionable insights,” though some point to feature gaps. One reviewer wishes for “more insights about how LLMs pick their sources,” and another mentions “user onboarding was somewhat difficult; I usually need personal onboarding.”

How it helps:

- Monitor sentiment at scale with citation-level analysis showing how AI characterizes your brand across contexts

- Scale across clients efficiently with unlimited seats, making it cost-effective for agencies managing multiple brands

- Export data flexibly via CSV and Looker Studio community connector for custom dashboards and client reporting

- Track competitive positioning by monitoring which prompts trigger competitor mentions versus your brand

- Access affordable multi-model coverage starting at €89/mo (about $103/mo at April 2026 exchange rates) with daily tracking and prompt organization

Peec’s homepage claims 2,000+ customers, including Fortune 500 companies and startups like n8n and ElevenLabs. The platform offers transparent pricing tiers with a 7-day free trial.

Trade-offs: Tracking-only tool that shows visibility gaps but doesn’t help execute fixes or shape perception. Costs scale with prompt volume. No workflow integration or execution automation.

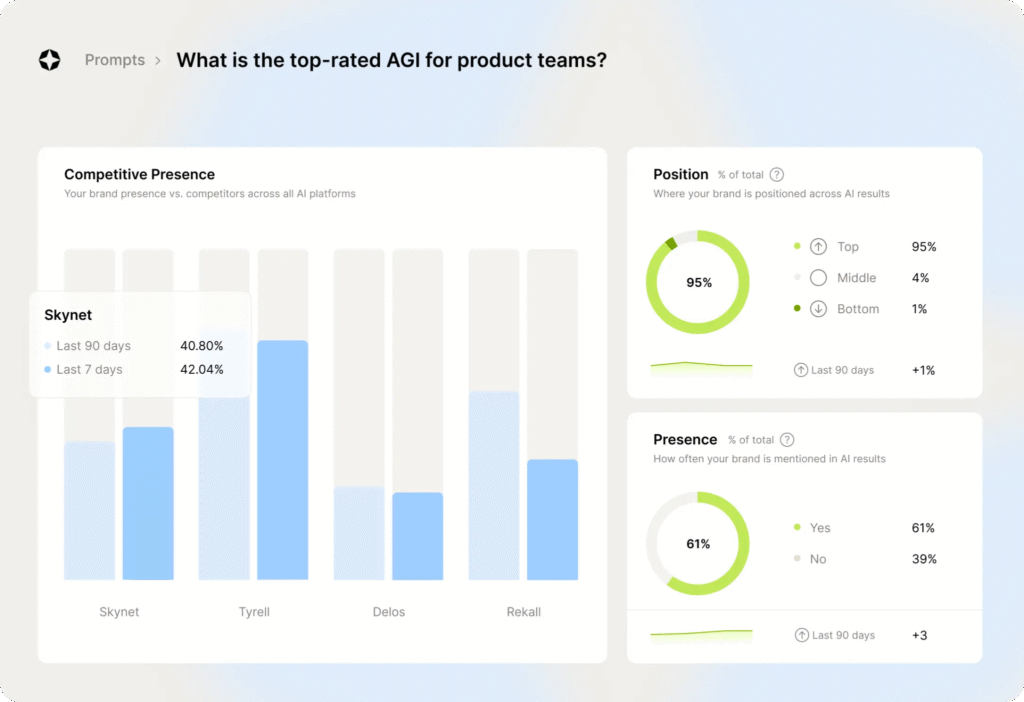

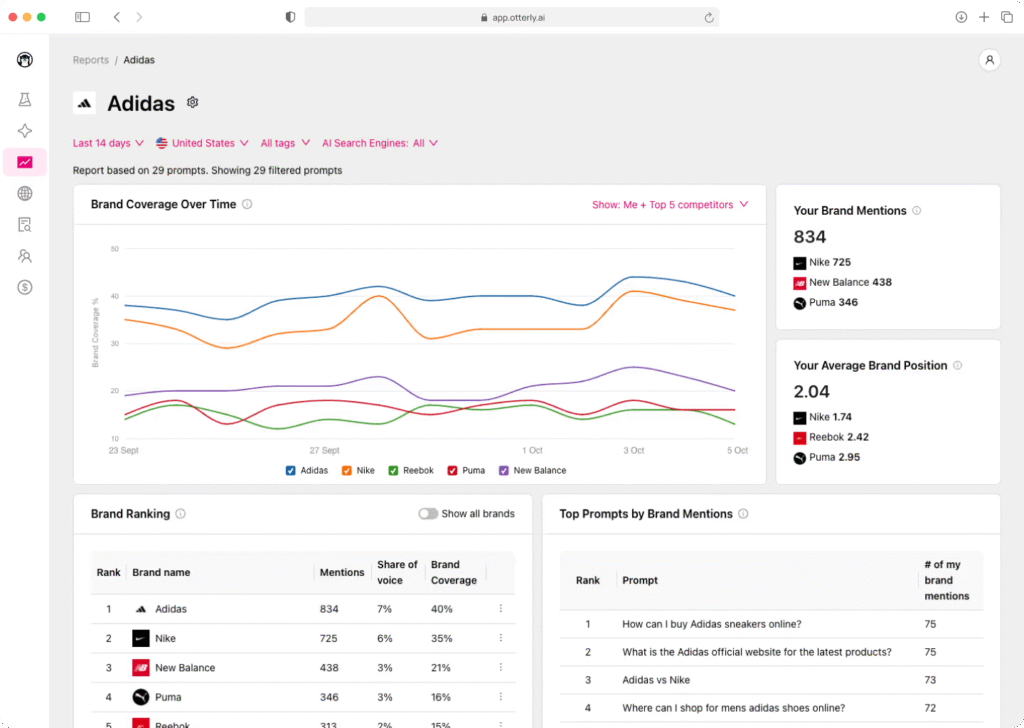

Otterly

Otterly emphasizes competitive benchmarking through its Share of AI Voice metric, quantifying your brand’s mention percentage relative to competitors across ChatGPT, Perplexity, Google AI Overviews, AI Mode, Gemini, and Copilot.

Best for: Teams wanting straightforward competitive positioning insights and Share of Voice benchmarking without execution workflows, content optimization, or strategic influence capabilities.

The platform monitors brand visibility, linked and unlinked citations, and competitive positioning across major AI platforms.

A G2 user mentioned, “implementation was incredibly straightforward — insights were available within one hour.” However, users acknowledge a learning curve. One reviewer mentions “a bit overwhelming when setting up custom alerts initially,” while another notes “the transition from traditional keywords to full-fledged prompts” requires adjustment.

How it helps:

- Quantify competitive standing through Share of AI Voice, showing your mention percentage versus competitors in specific query contexts

- Track regional performance with location-based monitoring to understand how AI represents your brand across different markets

- Monitor citation quality by tracking both linked and unlinked brand mentions to understand how AI references you

- Access an affordable entry point at $29/mo for initial visibility testing before committing to enterprise tools

- Navigate a clean interface with minimal complexity for teams new to AI visibility tracking
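As a rough illustration of the share-of-voice idea, the Python sketch below counts how many responses mention each brand and expresses each count as a percentage of all brand mentions. This is an assumed, simplified definition, not Otterly's actual formula; the function `share_of_voice`, the sample responses, and the brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Percentage of all counted brand mentions that belong to each brand.

    Assumption: a brand is counted at most once per response, using a
    simple case-insensitive substring match.
    """
    mentions = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    if total == 0:
        # No brand was mentioned anywhere, so every share is zero.
        return {brand: 0.0 for brand in brands}
    return {brand: 100 * mentions[brand] / total for brand in brands}


# Hypothetical AI responses to a set of tracked prompts.
sample_responses = [
    "For this use case, BrandA and BrandB are common choices.",
    "BrandA is often recommended for mid-size teams.",
    "Many teams start with BrandC.",
    "It depends on your requirements and budget.",
]

sov = share_of_voice(sample_responses, ["BrandA", "BrandB", "BrandC"])
print(sov)
```

Note that the fourth response mentions no brand at all and therefore drops out of the calculation entirely, which is the kind of interaction a mention-based metric cannot see.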

Otterly’s homepage cites 20,000+ marketing professionals and recognition by Gartner as a Cool Vendor in AI for Marketing. It offers a 14-day free trial, with plans starting at $29/mo.

Trade-offs: Prompt limits feel restrictive (10 on Lite, 100 on Standard). Advanced features require the expensive Pro tier ($989/mo). Shows competitive position but doesn’t help influence or improve it. Pure tracking with no content workflows or strategic positioning capabilities.

These ten tools represent different approaches to AI visibility and influence.

Some focus on tracking where you appear, others help you execute improvements at scale, and a few measure business impact. Choosing between them depends on which capabilities align with where your team actually operates and what you’re trying to achieve.

The next section breaks that down.

How to choose the right Profound alternative for your team

The right tool matches where your team currently operates and what outcome you’re trying to drive.

AI visibility isn’t a single problem. You’re dealing with different gaps: understanding how you show up, acting on insights, or connecting that visibility back to revenue. The more effective approach is to match tools to your current stage.

Match tools to where you are

Start by identifying where your team currently operates. Are you struggling with baseline visibility, execution at scale, or proving impact?

The table below maps each situation to the right tool type.

| Your situation | What you need | Tools |
| --- | --- | --- |
| B2B brand needing to influence how AI frames your category and positions you against alternatives | Platform connecting perception tracking, execution, and impact measurement to shape AI understanding strategically | Demand-Genius |
| Enterprise teams scaling content operations while tracking AI visibility | Execution platforms with integrated AI monitoring, workflow automation, and technical oversight | Conductor, Quattr, AirOps |
| Teams needing baseline visibility tracking to understand current AI representation | Pure tracking tools with multi-model coverage, competitive benchmarking, and flexible exports | LLMPulse, Peec, Otterly, Scrunch |
| SEO-first teams extending existing platforms with AI visibility capabilities | AI visibility layers that integrate with established SEO workflows and databases | Semrush, Ahrefs |

Most teams start by tracking to establish baseline visibility, then move into execution to close gaps, and eventually focus on improving their positioning as AI becomes a primary decision layer.

Evaluate what matters for each type

Different tools require different evaluation criteria depending on what they’re built to do.

- Tracking tools – Don’t just judge on model coverage and refresh cadence. Ask whether the tool tracks true perception (how AI understands your positioning) or just visibility (where you’re mentioned). Does it show narrative impact, stakeholder fit, and positioning risks, or just citation counts and share of voice?

- Execution tools – Look for platforms that help you maintain quality, consistency, and currency across your entire content library, not just churn out new content efficiently. Strong workflow integration, CMS deployment capabilities, and governance controls matter, but only if they preserve brand voice and strategic coherence at scale.

- Impact and attribution tools – These platforms must deliver revenue tracking, pipeline visibility, and tie AI visibility to actual business outcomes. Look for conversion metrics that connect visibility changes to pipeline movement and prove ROI, not just vanity metrics.

- End-to-end platforms – These tools should connect perception, execution, and impact while providing positioning insights and narrative alignment across all three dimensions. They integrate tracking, workflow automation, and revenue attribution in a single system.

Consider cost, value, and support

Value for money consistently ranks as the top concern for teams evaluating AI visibility tools. Here’s how different pricing tiers compare.

| Price tier | Tools | Best for | Watch for |

| Low-cost entry ($53–$103/mo) | LLMPulse, Peec, Otterly | Testing the category, establishing baselines, and agency clients with limited budgets | Prompt limits, feature restrictions, costs scaling with usage |

| Mid-tier ($99–$250/mo) | Semrush, Ahrefs, Scrunch, Demand-Genius | Teams extending existing SEO investments or establishing comprehensive tracking with strategic positioning | Credit-based limits, data reliability concerns, learning curves |

| Enterprise (custom pricing) | Conductor, Quattr, AirOps, Demand-Genius | Teams needing workflow integration, automation, strategic positioning intelligence, or revenue attribution | Setup complexity, onboarding requirements, alignment needs across teams |

Setup time and support quality matter as much as price. Platforms offering concierge onboarding require coordination and integration across systems such as Google Search Console (GSC), GA4, and your CMS.

In contrast, pure tracking tools offer faster setup, often under an hour, but come with minimal hands-on support. More integrated platforms require alignment across positioning and content teams, but connect multiple dimensions within a single system.

Ask the right questions

The right choice becomes clearer when you frame it around your actual needs rather than feature lists.

- What’s broken right now: you don’t know where you appear, you can’t act on what you find, or you can’t prove it matters?

- Is AI describing you correctly, or is it framing you in ways that hurt your positioning?

- When you get insights, can your team act on them at scale, or do they stay as reports?

- Do you need to connect AI visibility to pipeline or revenue outcomes?

- Are you monitoring where you appear, or shaping how you’re understood?

- What stage are you willing to invest in: visibility, execution, or outcome measurement?

- What’s your budget, and does the tool’s value justify its cost at your scale?

Being mentioned by AI doesn’t guarantee being understood correctly or positioned favorably. How AI interprets your brand matters as much as whether it mentions you at all.

Once you’ve chosen a tool, the next challenge is implementing it without disrupting your existing workflows.

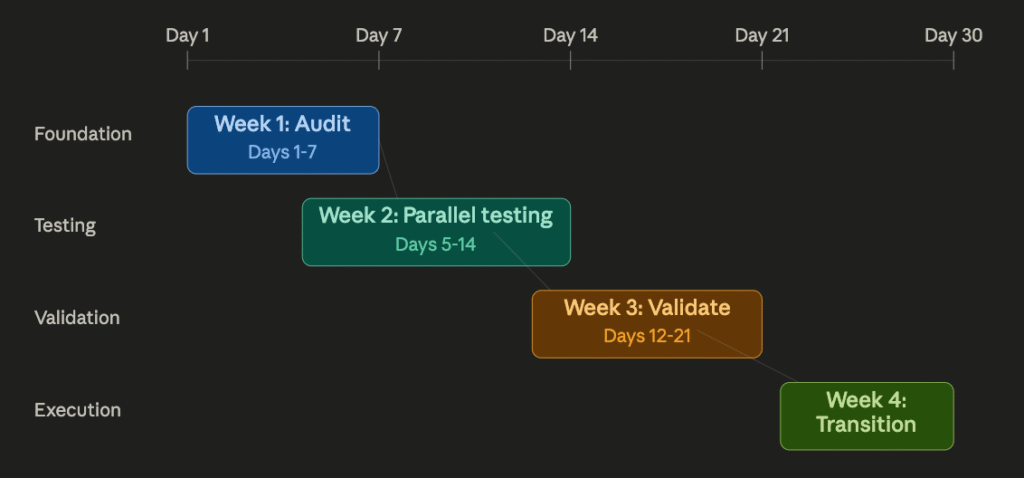

Migration playbook: Switch tools in 30 days

Switching from one tracking tool to another, or from one execution platform to a similar one, may give you better dashboards. But without clarity on what you’re trying to solve (visibility, execution, or impact), the switch ends up recreating the same problem elsewhere.

For B2B teams, this matters more: migrating without understanding whether you need better perception tracking, stakeholder fit analysis, or pipeline attribution means you’ll invest time in setup without addressing the actual gap.

A structured transition helps you evaluate alternatives properly, test them against real use cases, and move without losing continuity. Here’s how to approach it.

Week 1: Audit your current setup

Focus on understanding what you’re actually using today. Export your prompt library, dashboards, and reports, and document what you’re measuring and why.

Most teams discover they’re tracking more than they act on, which highlights where the real gap is.

Week 2: Run parallel testing

Set up 2 to 3 shortlisted tools using the same set of representative prompts. Run them for 7-10 days and compare data consistency, evidence quality, and usability.

For execution-heavy platforms with integration dependencies (like Quattr, AirOps, or Conductor), you may need to run parallel testing for 2-3 weeks to account for CMS integration, workflow setup, and team onboarding. Factor in this setup time when evaluating timelines.

The goal is to see which tool gives you insights you can actually act on, not just dashboards you’ll check once a week.
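One way to make the data consistency comparison concrete is to log, for each shortlisted tool, the share of your shared prompt set in which your brand is mentioned each day, then compare how much that figure swings. The Python sketch below is illustrative only: the tool names, the numbers, and the `summarize` helper are hypothetical, and it assumes each tool lets you export a daily mention rate.

```python
from statistics import mean, pstdev

# Hypothetical parallel-test log: for each tool, the share of the shared
# prompt set (0.0 to 1.0) in which the brand was mentioned on each test day.
daily_mention_rate = {
    "tool_a": [0.42, 0.40, 0.44, 0.41, 0.43, 0.42, 0.41],
    "tool_b": [0.55, 0.30, 0.61, 0.28, 0.52, 0.35, 0.58],
}


def summarize(rates):
    """Return the average mention rate and its day-to-day spread."""
    return {"mean": round(mean(rates), 3), "spread": round(pstdev(rates), 3)}


summary = {tool: summarize(rates) for tool, rates in daily_mention_rate.items()}

# Rank tools from most to least stable (smallest spread first).
ranked = sorted(summary, key=lambda tool: summary[tool]["spread"])

for tool in ranked:
    print(tool, summary[tool])
```

A large spread is not proof of a weak tool, since AI answers themselves vary from run to run, but it does tell you which dashboards need more samples before you trust a trend.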

Week 3: Validate fit and usability

Look beyond surface metrics and test how well each tool holds up in practice.

Check consistency in visibility patterns, evaluate evidence depth, and test with actual team members. Review governance needs like API access and integrations.

At this stage, it should be clear which tools help you move from insight to action and which dimension each tool actually solves for. Some tools excel at tracking visibility but offer no execution workflows, while others automate content deployment but lack perception analysis. The best fit depends on where your gap lies, whether in tracking, execution, or impact.

Week 4: Finalize and transition

Make your decision based on data quality, usability, and strategic fit. Set up integrations and reporting workflows, train your team, and document core workflows.

Cancel your current tool and reallocate the budget where it drives more impact.

Conclusion

The challenge with AI visibility tools is that most of them measure the wrong part of the buyer journey. They track citations, but the real competition happens earlier when AI is framing problems, building evaluation criteria, and deciding which brands are even worth considering.

This is the Dark AI layer we discussed earlier: the conversations where buyers define what matters, long before they compare vendors. Most tools can’t influence this stage because they only track the outcome, not the framing.

Demand-Genius approaches it differently.

The platform connects deep tracking (how AI perceives your brand across stakeholders), execution (closing content debt and narrative gaps), and impact (tying visibility to pipeline) in one system. Stakeholder mapping shows whether AI surfaces your strengths to the right buyers. Content debt analysis and AI agents identify why misalignment exists and how to fix it.

The difference is influence versus observation. If you’re only tracking where you appear, you’re reacting to decisions AI has already made. If you’re shaping how AI understands your positioning before buyers start comparing, you’re controlling the frame.

So, if your real problem is controlling how AI frames your category in the first place, Demand-Genius connects tracking, execution, and impact in ways other tools don’t.

Tracking shows where AI mentions your brand.

Execution turns insights into content changes or workflow improvements. Impact connects those changes to business outcomes like pipeline or conversions, proving whether visibility actually drives results.

AI builds an answer by first understanding the problem, then filtering options based on what fits. By the time it decides which brands to cite, it’s already narrowed the field based on how it framed the question.

If you’re not influencing that framing, you’re trying to get included after the decision is mostly made.

Yes. Most teams start with tracking to establish baseline visibility, then expand into execution and impact measurement as needs grow.

The key is recognizing when tracking alone stops being useful and acting before progress stalls.

Content debt means gaps between how you want AI to understand your brand and what your published content actually says.

Outdated, inconsistent, or weak content shapes perception incorrectly, making it harder to influence AI behavior later.

Link visibility tracking with analytics platforms like GA4 to map how AI exposure influences traffic, engagement, and deal progression.

Instead of measuring mentions alone, track whether visibility changes correlate with conversions and pipeline movement.
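As a rough sketch of that correlation check, the Python below compares a weekly visibility score with weekly pipeline opportunities, optionally shifting the pipeline series by a few weeks because pipeline may respond some time after exposure. All figures and names (`weekly_visibility`, `weekly_pipeline_opps`, `lagged_pearson`) are hypothetical. It assumes you have exported both series for the same weeks from your visibility tool and your analytics or CRM platform.

```python
from math import sqrt

# Hypothetical weekly figures; replace with exports from your own tools.
weekly_visibility = [0.31, 0.33, 0.36, 0.35, 0.40, 0.44, 0.43, 0.47]
weekly_pipeline_opps = [12, 13, 15, 14, 17, 19, 18, 21]


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    # Note: raises ZeroDivisionError if either series is constant.
    return cov / sqrt(var_x * var_y)


def lagged_pearson(visibility, outcomes, lag_weeks=0):
    """Correlate visibility with outcomes observed `lag_weeks` later."""
    if lag_weeks:
        visibility = visibility[:-lag_weeks]
        outcomes = outcomes[lag_weeks:]
    return pearson(visibility, outcomes)


for lag in (0, 1, 2):
    r = lagged_pearson(weekly_visibility, weekly_pipeline_opps, lag)
    print(f"lag={lag} weeks  r={r:.2f}")
```

A high coefficient only shows that the two series move together. It does not prove that visibility caused the pipeline change, so treat it as a prompt for closer investigation rather than as attribution.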

Model coverage shows where you appear, but strategic features determine what you can do with that insight.

Coverage gives visibility; features enable action and measurement. Start with coverage to understand gaps, then prioritize features that help you close them.